

Troubleshoot Azure Data Factory and Synapse pipelines

APPLIES TO: Azure Data Factory, Azure Synapse Analytics

This article explores common troubleshooting methods for external control activities in Azure Data Factory and Synapse pipelines.

Connector and copy activity

For connector issues, such as an error encountered when using the copy activity, refer to the Troubleshoot Connectors article.

Azure Databricks

Error code: 3200

-

Message: Error 403.

-

Cause:

The Databricks access token has expired. -

Recommendation: By default, the Azure Databricks access token is valid for 90 days. Create a new token and update the linked service.

Error code: 3201

-

Message:

Missing required field: settings.job.notebook_task.notebook_path. -

Cause:

Bad authoring: Notebook path not specified correctly. -

Recommendation: Specify the notebook path in the Databricks activity.

-

Message:

Cluster... does not exist. -

Cause:

Authoring error: Databricks cluster does not exist or has been deleted. -

Recommendation: Verify that the Databricks cluster exists.

-

Message:

Invalid Python file URI... Please visit Databricks user guide for supported URI schemes. -

Cause:

Bad authoring. -

Recommendation: Specify either absolute paths for workspace-addressing schemes, or `dbfs:/folder/subfolder/foo.py` for files stored in the Databricks File System (DBFS).

-

Message:

{0} LinkedService should have domain and accessToken as required properties. -

Cause:

Bad authoring. -

Recommendation: Verify the linked service definition.

-

Message:

{0} LinkedService should specify either existing cluster ID or new cluster information for creation. -

Cause:

Bad authoring. -

Recommendation: Verify the linked service definition.

-

Message:

Node type Standard_D16S_v3 is not supported. Supported node types: Standard_DS3_v2, Standard_DS4_v2, Standard_DS5_v2, Standard_D8s_v3, Standard_D16s_v3, Standard_D32s_v3, Standard_D64s_v3, Standard_D3_v2, Standard_D8_v3, Standard_D16_v3, Standard_D32_v3, Standard_D64_v3, Standard_D12_v2, Standard_D13_v2, Standard_D14_v2, Standard_D15_v2, Standard_DS12_v2, Standard_DS13_v2, Standard_DS14_v2, Standard_DS15_v2, Standard_E8s_v3, Standard_E16s_v3, Standard_E32s_v3, Standard_E64s_v3, Standard_L4s, Standard_L8s, Standard_L16s, Standard_L32s, Standard_F4s, Standard_F8s, Standard_F16s, Standard_H16, Standard_F4s_v2, Standard_F8s_v2, Standard_F16s_v2, Standard_F32s_v2, Standard_F64s_v2, Standard_F72s_v2, Standard_NC12, Standard_NC24, Standard_NC6s_v3, Standard_NC12s_v3, Standard_NC24s_v3, Standard_L8s_v2, Standard_L16s_v2, Standard_L32s_v2, Standard_L64s_v2, Standard_L80s_v2. -

Cause:

Bad authoring. -

Recommendation: Refer to the error message.

Error code: 3202

-

Message:

There were already 1000 jobs created in past 3600 seconds, exceeding rate limit: 1000 job creations per 3600 seconds. -

Cause:

Too many Databricks runs in an hour. -

Recommendation: Check all pipelines that use this Databricks workspace for their job creation rate. If pipelines launched too many Databricks runs in aggregate, migrate some pipelines to a new workspace.
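A client-side guard is one way to reason about the limit quoted in the message. The following is a minimal sketch of a sliding-window counter, assuming the 1000-per-3600-seconds figure from the error text; the class name `JobCreationWindow` and the injectable `clock` are hypothetical and are not part of any Data Factory or Databricks API.

```python
from collections import deque
import time


class JobCreationWindow:
    """Sliding-window counter for job creations (hypothetical helper)."""

    def __init__(self, max_jobs=1000, window_seconds=3600, clock=time.monotonic):
        self.max_jobs = max_jobs
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the window can be tested without waiting
        self._stamps = deque()

    def try_acquire(self):
        """Record one job creation if the window has room; return True on success."""
        now = self.clock()
        # Drop creations that have aged out of the window.
        while self._stamps and now - self._stamps[0] >= self.window_seconds:
            self._stamps.popleft()
        if len(self._stamps) >= self.max_jobs:
            return False
        self._stamps.append(now)
        return True
```

A caller would check `try_acquire()` before each submission and delay or reroute the run when it returns `False`.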

-

Message:

Could not parse request object: Expected 'key' and 'value' to be set for JSON map field base_parameters, got 'key: "..."' instead. -

Cause:

Authoring error: No value provided for the parameter. -

Recommendation: Inspect the pipeline JSON and ensure all parameters in the baseParameters notebook specify a nonempty value.
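The inspection can be automated before publishing. The sketch below assumes the notebook parameters sit under `properties.activities[].typeProperties.baseParameters` in the exported pipeline JSON; that layout and the function name `find_empty_base_parameters` are assumptions for illustration.

```python
import json


def find_empty_base_parameters(pipeline_json):
    """Return (activity name, parameter name) pairs whose value is empty."""
    pipeline = json.loads(pipeline_json)
    problems = []
    for activity in pipeline.get("properties", {}).get("activities", []):
        # Assumed location of the notebook parameters; treat a null map as empty.
        params = activity.get("typeProperties", {}).get("baseParameters") or {}
        for key, value in params.items():
            if value is None or value == "":
                problems.append((activity.get("name"), key))
    return problems
```

An empty result means every parameter in the scanned activities carries a value.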

-

Message:

User: SimpleUserContext{userId=..., name=user@visitor.com, orgId=...} is not authorized to access cluster. -

Cause: The user who generated the access token isn't allowed to access the Databricks cluster specified in the linked service.

-

Recommendation: Ensure the user has the required permissions in the workspace.

-

Message:

Job is not fully initialized yet. Please retry later. -

Cause: The job has not initialized.

-

Recommendation: Wait and try again later.

Error code: 3203

-

Message:

The cluster is in Terminated state, not available to receive jobs. Please fix the cluster or retry later. -

Cause: The cluster was terminated. For interactive clusters, this issue might be a race condition.

-

Recommendation: To avoid this error, use job clusters.

Error code: 3204

-

Message:

Job execution failed. -

Cause: Error messages indicate various issues, such as an unexpected cluster state or a specific activity. Often, no error message appears.

-

Recommendation: N/A

Error code: 3208

-

Message:

An error occurred while sending the request. -

Cause: The network connection to the Databricks service was interrupted.

-

Recommendation: If you're using a self-hosted integration runtime, make sure that the network connection is reliable from the integration runtime nodes. If you're using Azure integration runtime, retrying usually works.
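Where the call is made from your own client code, the retry advice can be sketched as exponential backoff around a transient failure. This is an illustration only: `call_with_retry` is a hypothetical helper, and treating `ConnectionError` as the transient signal is an assumption rather than documented service behavior.

```python
import time


def call_with_retry(operation, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Run operation(), retrying on ConnectionError with exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return operation()
        except ConnectionError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last failure
            sleep(base_delay * 2 ** attempt)  # 1x, 2x, 4x, ... the base delay
```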

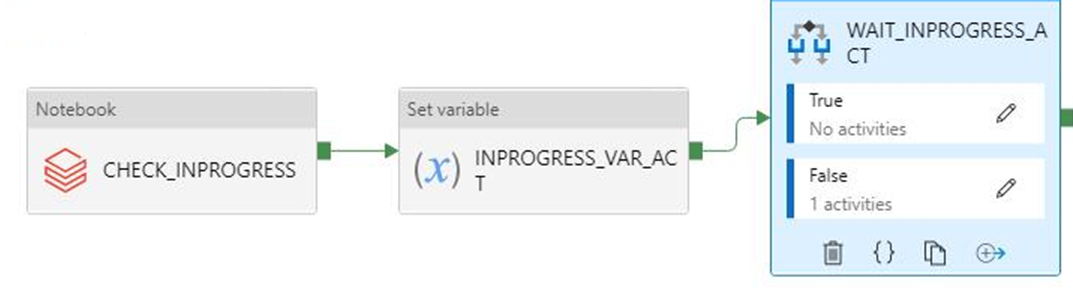

The Boolean run output starts coming as string instead of expected int

-

Symptoms: Your Boolean run output starts coming as string (for example, `"0"` or `"1"`) instead of expected int (for example, `0` or `1`).

You noticed this change on September 28, 2021 at around 9 AM IST when your pipeline relying on this output started failing. No change was made on the pipeline, and the Boolean output had been coming as expected before the failure.

-

Cause: This issue is caused by a recent change, which is by design. After the change, if the result is a number that starts with zero, Azure Data Factory will convert the number to the octal value, which is a bug. This number is always 0 or 1, which never caused issues before the change. So to fix the octal conversion, the string output is passed from the Notebook run as is.

-

Recommendation: Change the if condition to something like

if(value=="0").

Azure Data Lake Analytics

The following table applies to U-SQL.

Error code: 2709

-

Message:

The access token is from the incorrect tenant. -

Cause: Incorrect Azure Active Directory (Azure AD) tenant.

-

Recommendation: Verify that the linked service uses the correct Azure Active Directory (Azure AD) tenant.

-

Message:

We cannot accept your job at this moment. The maximum number of queued jobs for your account is 200. -

Cause: This error is caused by throttling on Data Lake Analytics.

-

Recommendation: Reduce the number of submitted jobs to Data Lake Analytics. Either change triggers and concurrency settings on activities, or increase the limits on Data Lake Analytics.

-

Message:

This job was rejected because it requires 24 AUs. This account's administrator-defined policy prevents a job from using more than 5 AUs. -

Cause: This error is caused by throttling on Data Lake Analytics.

-

Recommendation: Reduce the number of submitted jobs to Data Lake Analytics. Either change triggers and concurrency settings on activities, or increase the limits on Data Lake Analytics.

Error code: 2705

-

Message:

Forbidden. ACL verification failed. Either the resource does not exist or the user is not authorized to perform the requested operation. User is not able to access Data Lake Store. User is not authorized to use Data Lake Analytics. -

Cause: The service principal or certificate doesn't have access to the file in storage.

-

Recommendation: Verify that the service principal or certificate that the user provides for Data Lake Analytics jobs has access to both the Data Lake Analytics account, and the default Data Lake Storage instance from the root folder.

Error code: 2711

-

Message:

Forbidden. ACL verification failed. Either the resource does not exist or the user is not authorized to perform the requested operation. User is not able to access Data Lake Store. User is not authorized to use Data Lake Analytics. -

Cause: The service principal or certificate doesn't have access to the file in storage.

-

Recommendation: Verify that the service principal or certificate that the user provides for Data Lake Analytics jobs has access to both the Data Lake Analytics account, and the default Data Lake Storage instance from the root folder.

-

Message:

Cannot find the 'Azure Data Lake Store' file or folder. -

Cause: The path to the U-SQL file is incorrect, or the linked service credentials don't have access.

-

Recommendation: Verify the path and credentials provided in the linked service.

Error code: 2704

-

Message:

Forbidden. ACL verification failed. Either the resource does not exist or the user is not authorized to perform the requested operation. User is not able to access Data Lake Store. User is not authorized to use Data Lake Analytics. -

Cause: The service principal or certificate doesn't have access to the file in storage.

-

Recommendation: Verify that the service principal or certificate that the user provides for Data Lake Analytics jobs has access to both the Data Lake Analytics account, and the default Data Lake Storage instance from the root folder.

Error code: 2707

-

Message:

Cannot resolve the account of AzureDataLakeAnalytics. Please check 'AccountName' and 'DataLakeAnalyticsUri'. -

Cause: The Data Lake Analytics account in the linked service is incorrect.

-

Recommendation: Verify that the correct account is provided.

Error code: 2703

-

Message:

Error Id: E_CQO_SYSTEM_INTERNAL_ERROR (or any error that starts with "Error Id:"). -

Cause: The error is from Data Lake Analytics.

-

Recommendation: The job was submitted to Data Lake Analytics, and the script failed there. Investigate in Data Lake Analytics. In the portal, go to the Data Lake Analytics account and look for the job by using the Data Factory activity run ID (don't use the pipeline run ID). The job there provides more information about the error, and will help you troubleshoot.

If the resolution isn't clear, contact the Data Lake Analytics support team and provide the job Universal Resource Locator (URL), which includes your account name and the job ID.

Azure functions

Error code: 3602

-

Message:

Invalid HttpMethod: '%method;'. -

Cause: The HttpMethod specified in the activity payload isn't supported by Azure Function Activity.

-

Recommendation: The supported HttpMethods are: PUT, POST, GET, DELETE, OPTIONS, HEAD, and TRACE.

Error code: 3603

-

Message:

Response Content is not a valid JObject. -

Cause: The Azure function that was called didn't return a JSON Payload in the response. Azure Data Factory and Synapse pipeline Azure function activity only support JSON response content.

-

Recommendation: Update the Azure function to return a valid JSON Payload. For example, a C# function may return

(ActionResult)new OkObjectResult("{\"Id\":\"123\"}");
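To check a function's response body before wiring it into a pipeline, a small test can confirm that it parses as a JSON object rather than a bare string or array. The sketch below uses only the Python standard library; the helper name `is_json_object` is hypothetical, and equating "valid JObject" with "top-level JSON object" is an assumption based on the error message.

```python
import json


def is_json_object(body):
    """Return True if body parses as a JSON object (a dict at the top level)."""
    try:
        return isinstance(json.loads(body), dict)
    except (TypeError, ValueError):
        # TypeError: body is not str/bytes; ValueError: body is not valid JSON.
        return False
```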

Error code: 3606

-

Message: Azure function activity missing function key.

-

Cause: The Azure function activity definition isn't complete.

-

Recommendation: Check that the input Azure function activity JSON definition has a property named

functionKey.

Error code: 3607

-

Message:

Azure function activity missing function name. -

Cause: The Azure function activity definition isn't complete.

-

Recommendation: Check that the input Azure function activity JSON definition has a property named

functionName.

Error code: 3608

-

Message:

Call to provided Azure function '%FunctionName;' failed with status-'%statusCode;' and message - '%message;'. -

Cause: The Azure function details in the activity definition may be incorrect.

-

Recommendation: Fix the Azure function details and try again.

Error code: 3609

-

Message:

Azure function activity missing functionAppUrl. -

Cause: The Azure function activity definition isn't complete.

-

Recommendation: Check that the input Azure Function activity JSON definition has a property named

functionAppUrl.

Error code: 3610

-

Message:

There was an error while calling endpoint. -

Cause: The function URL may be incorrect.

-

Recommendation: Verify that the value for `functionAppUrl` in the activity JSON is correct and try again.

Error code: 3611

-

Message:

Azure function activity missing Method in JSON. -

Cause: The Azure function activity definition isn't complete.

-

Recommendation: Check that the input Azure function activity JSON definition has a property named

method.

Error code: 3612

-

Message:

Azure function activity missing LinkedService definition in JSON. -

Cause: The Azure function activity definition isn't complete.

-

Recommendation: Check that the input Azure function activity JSON definition has linked service details.
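Several of the Azure function errors above come down to a missing or unsupported setting, so a pre-flight check can catch them together. The sketch below works on a flat dictionary of settings; flattening the activity and linked service JSON into one dictionary is an assumption made for illustration, and `check_function_settings` is a hypothetical helper. The property names and the method list are the ones named in this section.

```python
# Methods listed as supported for error code 3602.
SUPPORTED_METHODS = {"PUT", "POST", "GET", "DELETE", "OPTIONS", "HEAD", "TRACE"}
# Properties named by error codes 3606, 3607, 3609, and 3611.
REQUIRED_PROPERTIES = ("functionAppUrl", "functionKey", "functionName", "method")


def check_function_settings(settings):
    """Return a list of problems found in a flat dict of function settings."""
    problems = [f"missing {name}" for name in REQUIRED_PROPERTIES if not settings.get(name)]
    method = settings.get("method")
    if method and method not in SUPPORTED_METHODS:
        problems.append(f"unsupported method {method}")
    return problems
```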

Azure Machine Learning

Error code: 4101

-

Message:

AzureMLExecutePipeline activity '%activityName;' has invalid value for property '%propertyName;'. -

Cause: Bad format or missing definition of property `%propertyName;`. -

Recommendation: Check if the activity `%activityName;` has the property `%propertyName;` defined with correct data.

Error code: 4110

-

Message:

AzureMLExecutePipeline activity missing LinkedService definition in JSON. -

Cause: The AzureMLExecutePipeline activity definition isn't complete.

-

Recommendation: Check that the input AzureMLExecutePipeline activity JSON definition has correctly linked service details.

Error code: 4111

-

Message:

AzureMLExecutePipeline activity has wrong LinkedService type in JSON. Expected LinkedService type: '%expectedLinkedServiceType;', current LinkedService type: Expected LinkedService type: '%currentLinkedServiceType;'. -

Cause: Wrong activity definition.

-

Recommendation: Check that the input AzureMLExecutePipeline activity JSON definition has correctly linked service details.

Error code: 4112

-

Message:

AzureMLService linked service has invalid value for property '%propertyName;'. -

Cause: Bad format or missing definition of property '%propertyName;'.

-

Recommendation: Check if the linked service has the property `%propertyName;` defined with correct data.

Error code: 4121

-

Message:

Request sent to Azure Machine Learning for operation '%operation;' failed with http status code '%statusCode;'. Error message from Azure Machine Learning: '%externalMessage;'. -

Cause: The Credential used to access Azure Machine Learning has expired.

-

Recommendation: Verify that the credential is valid and retry.

Error code: 4122

-

Message:

Request sent to Azure Machine Learning for operation '%operation;' failed with http status code '%statusCode;'. Error message from Azure Machine Learning: '%externalMessage;'. -

Cause: The credential provided in Azure Machine Learning Linked Service is invalid, or doesn't have permission for the operation.

-

Recommendation: Verify that the credential in Linked Service is valid, and has permission to access Azure Machine Learning.

Error code: 4123

-

Message:

Request sent to Azure Machine Learning for operation '%operation;' failed with http status code '%statusCode;'. Error message from Azure Machine Learning: '%externalMessage;'. -

Cause: The properties of the activity such as `pipelineParameters` are invalid for the Azure Machine Learning (ML) pipeline. -

Recommendation: Check that the value of activity properties matches the expected payload of the published Azure ML pipeline specified in Linked Service.

Error code: 4124

-

Message:

Request sent to Azure Machine Learning for operation '%operation;' failed with http status code '%statusCode;'. Error message from Azure Machine Learning: '%externalMessage;'. -

Cause: The published Azure ML pipeline endpoint doesn't exist.

-

Recommendation: Verify that the published Azure Machine Learning pipeline endpoint specified in Linked Service exists in Azure Machine Learning.

Error code: 4125

-

Message:

Request sent to Azure Machine Learning for operation '%operation;' failed with http status code '%statusCode;'. Error message from Azure Machine Learning: '%externalMessage;'. -

Cause: There is a server error on Azure Machine Learning.

-

Recommendation: Retry later. Contact the Azure Machine Learning team for help if the issue continues.

Error code: 4126

-

Message:

Azure ML pipeline run failed with status: '%amlPipelineRunStatus;'. Azure ML pipeline run Id: '%amlPipelineRunId;'. Please check in Azure Machine Learning for more error logs. -

Cause: The Azure ML pipeline run failed.

-

Recommendation: Check Azure Machine Learning for more error logs, then fix the ML pipeline.

Common

Error code: 2103

-

Message:

Please provide value for the required property '%propertyName;'. -

Cause: The required value for the property has not been provided.

-

Recommendation: Provide the value from the message and try again.

Error code: 2104

-

Message:

The type of the property '%propertyName;' is incorrect. -

Cause: The provided property type isn't correct.

-

Recommendation: Fix the type of the property and try again.

Error code: 2105

-

Message:

An invalid json is provided for property '%propertyName;'. Encountered an error while trying to parse: '%message;'. -

Cause: The value for the property is invalid or isn't in the expected format.

-

Recommendation: Refer to the documentation for the property and verify that the value provided includes the right format and type.

Error code: 2106

-

Message:

The storage connection string is invalid. %errorMessage; -

Cause: The connection string for the storage is invalid or has incorrect format.

-

Recommendation: Go to the Azure portal and find your storage, then copy-and-paste the connection string into your linked service and try again.
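A quick structural check can show whether a pasted connection string was truncated or mangled. The sketch below assumes an account-key style string made of `Key=Value` segments separated by semicolons; the function names and the choice of `AccountName` and `AccountKey` as required keys are assumptions for illustration, and other credential styles would need different keys.

```python
REQUIRED_KEYS = ("AccountName", "AccountKey")  # assumed for account-key strings


def parse_connection_string(value):
    """Split 'Key=Value;Key=Value' into a dict, rejecting malformed segments."""
    parts = {}
    for segment in value.split(";"):
        if not segment.strip():
            continue  # tolerate a trailing semicolon
        # Split on the first '=' only, so base64 padding in a key survives.
        key, separator, setting = segment.partition("=")
        if not separator or not key.strip():
            raise ValueError(f"malformed segment: {segment!r}")
        parts[key.strip()] = setting
    return parts


def missing_keys(value):
    """Return the required keys that are absent or empty."""
    parts = parse_connection_string(value)
    return [key for key in REQUIRED_KEYS if not parts.get(key)]
```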

Error code: 2110

-

Message:

The linked service type '%linkedServiceType;' is not supported for '%executorType;' activities. -

Cause: The linked service specified in the activity is incorrect.

-

Recommendation: Verify that the linked service type is one of the supported types for the activity. For example, the linked service type for HDI activities can be HDInsight or HDInsightOnDemand.

Error code: 2111

-

Message:

The type of the property '%propertyName;' is incorrect. The expected type is %expectedType;. -

Cause: The type of the provided property isn't correct.

-

Recommendation: Fix the property type and try again.

Error code: 2112

-

Message:

The cloud type is unsupported or could not be determined for storage from the EndpointSuffix '%endpointSuffix;'. -

Cause: The cloud type is unsupported or couldn't be determined for storage from the EndpointSuffix.

-

Recommendation: Use storage in another cloud and try again.

Custom

The following table applies to Azure Batch.

Error code: 2500

-

Message:

Hit unexpected exception and execution failed. -

Cause:

Can't launch command, or the program returned an error code. -

Recommendation: Ensure that the executable file exists. If the program started, verify that stdout.txt and stderr.txt were uploaded to the storage account. It's a good practice to include logs in your code for debugging.

Error code: 2501

-

Message:

Cannot access user batch account; please check batch account settings. -

Cause: Incorrect Batch access key or pool name.

-

Recommendation: Verify the pool name and the Batch access key in the linked service.

Error code: 2502

-

Message:

Cannot access user storage account; please check storage account settings. -

Cause: Incorrect storage account name or access key.

-

Recommendation: Verify the storage account name and the access key in the linked service.

Error code: 2504

-

Message:

Operation returned an invalid status code 'BadRequest'. -

Cause: Too many files in the `folderPath` of the custom activity. The total size of `resourceFiles` can't be more than 32,768 characters. -

Recommendation: Remove unnecessary files, or Zip them and add an unzip command to extract them.

For example, use

powershell.exe -nologo -noprofile -command "& { Add-Type -A 'System.IO.Compression.FileSystem'; [IO.Compression.ZipFile]::ExtractToDirectory($zipFile, $folder); }" ; $folder\yourProgram.exe
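The command above covers extraction on the Batch node; the archive itself still has to be built before upload. The following is a minimal sketch of that packaging step using Python's standard `zipfile` module. The function name `zip_folder` is hypothetical, and the archive should be written outside the folder being zipped so it does not include itself.

```python
import zipfile
from pathlib import Path


def zip_folder(folder, archive_path):
    """Write every file under folder into one zip, keeping relative paths."""
    folder = Path(folder)
    with zipfile.ZipFile(archive_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(folder.rglob("*")):
            if path.is_file():
                # Store paths relative to the folder root, with forward slashes.
                archive.write(path, path.relative_to(folder).as_posix())
    return archive_path
```

Uploading the single archive in place of the individual files keeps the resource file list short.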

Error code: 2505

-

Message:

Cannot create Shared Access Signature unless Account Key credentials are used. -

Cause: Custom activities support only storage accounts that use an access key.

-

Recommendation: Refer to the error description.

Error code: 2507

-

Message:

The folder path does not exist or is empty: ... -

Cause: No files are in the storage account at the specified path.

-

Recommendation: The folder path must contain the executable files you want to run.

Error code: 2508

-

Message:

There are duplicate files in the resource folder. -

Cause: Multiple files of the same name are in different subfolders of folderPath.

-

Recommendation: Custom activities flatten folder structure under folderPath. If you need to preserve the folder structure, zip the files and extract them in Azure Batch by using an unzip command.

For example, use

powershell.exe -nologo -noprofile -command "& { Add-Type -A 'System.IO.Compression.FileSystem'; [IO.Compression.ZipFile]::ExtractToDirectory($zipFile, $folder); }" ; $folder\yourProgram.exe
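Because the folder structure is flattened, name collisions can be found locally before upload. The sketch below walks a local copy of the folder and reports file names that occur in more than one place; `find_duplicate_names` is a hypothetical helper, and running it against a local copy rather than the storage account is an assumption.

```python
from collections import defaultdict
from pathlib import Path


def find_duplicate_names(folder):
    """Map each file name that appears more than once to its relative paths."""
    root = Path(folder)
    by_name = defaultdict(list)
    for path in sorted(root.rglob("*")):
        if path.is_file():
            by_name[path.name].append(path.relative_to(root).as_posix())
    return {name: paths for name, paths in by_name.items() if len(paths) > 1}
```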

Error code: 2509

-

Message:

Batch url ... is invalid; it must be in Uri format. -

Cause: Batch URLs must be similar to

https://mybatchaccount.eastus.batch.azure.com -

Recommendation: Refer to the error description.
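A basic shape check on the Batch URL can be done with the standard library before saving the linked service. This is a rough sketch: `looks_like_batch_url` is a hypothetical helper, and requiring the `https` scheme is an assumption drawn from the example URL above rather than a documented rule.

```python
from urllib.parse import urlparse


def looks_like_batch_url(url):
    """Return True if url has an https scheme and a host name."""
    parsed = urlparse(url.strip())
    return parsed.scheme == "https" and bool(parsed.hostname)
```

A value pasted without the scheme, such as a bare host name, fails this check.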

Error code: 2510

-

Message:

An error occurred while sending the request. -

Cause: The batch URL is invalid.

-

Recommendation: Verify the batch URL.

HDInsight

Error code: 206

-

Message:

The batch ID for Spark job is invalid. Please retry your job. -

Cause: There was an internal problem with the service that caused this error.

-

Recommendation: This issue could be transient. Retry your job after some time.

Error code: 207

-

Message:

Could not determine the region from the provided storage account. Please try using another primary storage account for the on demand HDI. -

Cause: There was an internal error while trying to determine the region from the primary storage account.

-

Recommendation: Try another storage.

Error code: 208

-

Message:

Service Principal or the MSI authenticator are not instantiated. Please consider providing a Service Principal in the HDI on demand linked service which has permissions to create an HDInsight cluster in the provided subscription and try again. -

Cause: There was an internal error while trying to read the Service Principal or instantiating the MSI authentication.

-

Recommendation: Consider providing a service principal, which has permissions to create an HDInsight cluster in the provided subscription and try again. Verify that the Managed Identities are set up correctly.

Error code: 2300

-

Message:

Failed to submit the job '%jobId;' to the cluster '%cluster;'. Error: %errorMessage;. -

Cause: The error message contains a message similar to `The remote name could not be resolved.`. The provided cluster URI might be invalid. -

Recommendation: Verify that the cluster hasn't been deleted, and that the provided URI is correct. When you open the URI in a browser, you should see the Ambari UI. If the cluster is in a virtual network, the URI should be the private URI. To open it, use a Virtual Machine (VM) that is part of the same virtual network.

For more information, see Directly connect to Apache Hadoop services.

-

Cause: If the error message contains a message similar to `A task was canceled.`, the job submission timed out. -

Recommendation: The problem could be either general HDInsight connectivity or network connectivity. First confirm that the HDInsight Ambari UI is available from any browser. Then check that your credentials are still valid.

If you're using a self-hosted integration runtime (IR), perform this step from the VM or machine where the self-hosted IR is installed. Then try submitting the job again.

For more information, read Ambari Web UI.

-

Cause: When the error message contains a message similar to `User admin is locked out in Ambari` or `Unauthorized: Ambari user name or password is incorrect`, the credentials for HDInsight are incorrect or have expired. -

Recommendation: Correct the credentials and redeploy the linked service. First verify that the credentials work on HDInsight by opening the cluster URI on any browser and trying to sign in. If the credentials don't work, you can reset them from the Azure portal.

For ESP cluster, reset the password through self service password reset.

-

Cause: When the error message contains a message similar to `502 - Web server received an invalid response while acting as a gateway or proxy server`, this error is returned by HDInsight service. -

Recommendation: A 502 error often occurs when your Ambari Server process was shut down. You can restart the Ambari Services by rebooting the head node.

-

Connect to one of your nodes on HDInsight using SSH.

-

Identify your active head node host by running

ping headnodehost. -

Connect to your active head node using SSH, as Ambari Server sits on the active head node.

-

Reboot the active head node.

For more information, look through the Azure HDInsight troubleshooting documentation. For example:

- Ambari UI 502 error

- RpcTimeoutException for Apache Spark thrift server

- Troubleshooting bad gateway errors in Application Gateway.

-

-

Cause: When the error message contains a message similar to `Unable to service the submit job request as templeton service is busy with too many submit job requests` or `Queue root.joblauncher already has 500 applications, cannot accept submission of application`, too many jobs are being submitted to HDInsight at the same time. -

Recommendation: Limit the number of concurrent jobs submitted to HDInsight. Refer to activity concurrency if the jobs are being submitted by the same activity. Change the triggers so the concurrent pipeline runs are spread out over time.

Refer to HDInsight documentation to adjust `templeton.parallellism.job.submit` as the error suggests.

Error code: 2301

-

Message:

Could not get the status of the application '%physicalJobId;' from the HDInsight service. Received the following error: %message;. Please refer to HDInsight troubleshooting documentation or contact their support for further assistance. -

Cause: HDInsight cluster or service has issues.

-

Recommendation: This error occurs when the service doesn't receive a response from HDInsight cluster when attempting to request the status of the running job. This issue might be on the cluster itself, or HDInsight service might have an outage.

Refer to HDInsight troubleshooting documentation, or contact Microsoft support for further assistance.

Error code: 2302

-

Message:

Hadoop job failed with exit code '%exitCode;'. See '%logPath;/stderr' for more details. Alternatively, open the Ambari UI on the HDI cluster and find the logs for the job '%jobId;'. Contact HDInsight team for further support. -

Cause: The job was submitted to the HDI cluster and failed there.

-

Recommendation:

- Check Ambari UI:

- Ensure that all services are still running.

- From Ambari UI, check the alert section in your dashboard.

- For more information on alerts and resolutions to alerts, see Managing and Monitoring a Cluster.

- Review your YARN memory. If your YARN memory is high, the processing of your jobs may be delayed. If you do not have enough resources to accommodate your Spark application/job, scale up the cluster to ensure the cluster has enough memory and cores.

- Run a Sample test job.

- If you run the same job on HDInsight backend, check that it succeeded. For examples of sample runs, see Run the MapReduce examples included in HDInsight.

- If the job still failed on HDInsight, check the application logs and information, which to provide to Support:

- Check whether the job was submitted to YARN. If the job wasn't submitted to yarn, use `--master yarn`.

- If the application finished execution, collect the start time and end time of the YARN Application. If the application didn't complete the execution, collect Start time/Launch time.

- Check and collect application log with `yarn logs -applicationId <Insert_Your_Application_ID>`.

- Check and collect the yarn Resource Manager logs under the `/var/log/hadoop-yarn/yarn` directory.

- If these steps are not enough to resolve the issue, contact Azure HDInsight team for support and provide the above logs and timestamps.

Error code: 2303

-

Message:

Hadoop job failed with transient exit code '%exitCode;'. See '%logPath;/stderr' for more details. Alternatively, open the Ambari UI on the HDI cluster and find the logs for the job '%jobId;'. Try again or contact HDInsight team for further support. -

Cause: The job was submitted to the HDI cluster and failed there.

-

Recommendation:

- Check Ambari UI:

- Ensure that all services are still running.

- From Ambari UI, check the alert section in your dashboard.

- For more information on alerts and resolutions to alerts, see Managing and Monitoring a Cluster.

- Review your YARN memory. If your YARN memory is high, the processing of your jobs may be delayed. If you do not have enough resources to accommodate your Spark application/job, scale up the cluster to ensure the cluster has enough memory and cores.

- Run a Sample test job.

- If you run the same job on HDInsight backend, check that it succeeded. For examples of sample runs, see Run the MapReduce examples included in HDInsight.

- If the job still failed on HDInsight, check the application logs and information, which to provide to Support:

- Check whether the job was submitted to YARN. If the job wasn't submitted to yarn, use `--master yarn`.

- If the application finished execution, collect the start time and end time of the YARN Application. If the application didn't complete the execution, collect Start time/Launch time.

- Check and collect application log with `yarn logs -applicationId <Insert_Your_Application_ID>`.

- Check and collect the yarn Resource Manager logs under the `/var/log/hadoop-yarn/yarn` directory.

- If these steps are not enough to resolve the issue, contact Azure HDInsight team for support and provide the above logs and timestamps.

Error code: 2304

-

Message:

MSI authentication is not supported on storages for HDI activities. -

Cause: The storage linked services used in the HDInsight (HDI) linked service or HDI activity, are configured with an MSI authentication that isn't supported.

-

Recommendation: Provide full connection strings for storage accounts used in the HDI linked service or HDI activity.

Error code: 2305

-

Message:

Failed to initialize the HDInsight client for the cluster '%cluster;'. Error: '%message;' -

Cause: The connection information for the HDI cluster is incorrect, the provided user doesn't have permissions to perform the required action, or the HDInsight service has issues responding to requests from the service.

-

Recommendation: Verify that the user information is correct, and that the Ambari UI for the HDI cluster can be opened in a browser from the VM where the IR is installed (for a cocky-hosted IR), or can exist opened from any machine (for Azure IR).

Error code: 2306

-

Message:

An invalid json is provided for script action '%scriptActionName;'. Error: '%message;' -

Cause: The JSON provided for the script action is invalid.

-

Recommendation: The error message should help to identify the issue. Fix the JSON configuration and try again.

Check Azure HDInsight on-demand linked service for more information.

Error code: 2310

-

Message:

Failed to submit Spark job. Error: '%message;' -

Cause: The service tried to create a batch on a Spark cluster using Livy API (livy/batch), but received an error.

-

Recommendation: Follow the error message to fix the issue. If there isn't enough information to get it resolved, contact the HDI team and provide them the batch ID and job ID, which can be found in the activity run Output in the service Monitoring page. To troubleshoot further, collect the full log of the batch job.

For more information on how to collect the full log, see Get the full log of a batch job.

Error code: 2312

-

Message:

Spark job failed, batch id:%batchId;. Please follow the links in the activity run Output from the service Monitoring page to troubleshoot the run on HDInsight Spark cluster. Please contact HDInsight support team for further assistance. -

Cause: The job failed on the HDInsight Spark cluster.

-

Recommendation: Follow the links in the activity run Output in the service Monitoring page to troubleshoot the run on HDInsight Spark cluster. Contact HDInsight support team for further assistance.

For more information on how to collect the full log, see Get the full log of a batch job.

Error code: 2313

-

Message:

The batch with ID '%batchId;' was not found on Spark cluster. Open the Spark History UI and try to find it there. Contact HDInsight support for further help. -

Cause: The batch was deleted on the HDInsight Spark cluster.

-

Recommendation: Troubleshoot batches on the HDInsight Spark cluster. Contact HDInsight support for further assistance.

For more information on how to collect the full log, see Get the full log of a batch job, and share the full log with HDInsight support for further assistance.

Error code: 2328

-

Message:

Failed to create the on demand HDI cluster. Cluster or linked service name: '%clusterName;', error: '%message;' -

Cause: The error message should show the details of what went wrong.

-

Recommendation: The error message should help to troubleshoot the issue.

Error code: 2329

-

Message:

Failed to delete the on demand HDI cluster. Cluster or linked service name: '%clusterName;', error: '%message;' -

Cause: The error message should show the details of what went wrong.

-

Recommendation: The error message should help to troubleshoot the issue.

Error code: 2331

-

Message:

The file path should not be null or empty. -

Cause: The provided file path is empty.

-

Recommendation: Provide a path for a file that exists.

Error code: 2340

-

Message:

HDInsightOnDemand linked service does not support execution via SelfHosted IR. Your IR name is '%IRName;'. Please select an Azure IR instead. -

Cause: The HDInsightOnDemand linked service doesn't support execution via SelfHosted IR.

-

Recommendation: Select an Azure IR and try again.

Error code: 2341

-

Message:

HDInsight cluster URL '%clusterUrl;' is wrong, it must be in URI format and the scheme must be 'https'. -

Cause: The provided URL isn't in the correct format.

-

Recommendation: Fix the cluster URL and try again.
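The rule stated in the message for error 2341 (URI format with an 'https' scheme) can be checked before a linked service is saved. The following is a minimal sketch only: the function name `is_valid_cluster_url` is hypothetical, and the service's own validation may apply additional rules that are not described here.

```python
from urllib.parse import urlparse


def is_valid_cluster_url(url: str) -> bool:
    """Return True if url is an absolute URI whose scheme is https.

    Hypothetical helper: it only mirrors the two conditions named in the
    error message (URI format, https scheme), nothing more.
    """
    parsed = urlparse(url.strip())
    # A usable cluster URL needs the https scheme and a host name.
    return parsed.scheme == "https" and bool(parsed.netloc)


if __name__ == "__main__":
    for candidate in (
        "https://mycluster.azurehdinsight.net/",
        "http://mycluster.azurehdinsight.net/",
        "mycluster.azurehdinsight.net",
    ):
        print(candidate, is_valid_cluster_url(candidate))
```

A plain host name without a scheme fails the check because `urlparse` treats it as a path rather than a network location.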

Error code: 2342

-

Message:

Failed to connect to HDInsight cluster: '%errorMessage;'. -

Cause: Either the provided credentials are wrong for the cluster, or there was a network configuration or connection issue, or the IR is having problems connecting to the cluster.

-

Recommendation:

-

Verify that the credentials are correct by opening the HDInsight cluster's Ambari UI in a browser.

-

If the cluster is in a Virtual Network (VNet) and a self-hosted IR is being used, the HDI URL must be the private URL in VNets, and should have -int listed after the cluster name.

For example, change https://mycluster.azurehdinsight.net/ to https://mycluster-int.azurehdinsight.net/. Note the -int after mycluster, but before .azurehdinsight.net.

-

If the cluster is in a VNet, the self-hosted IR is being used, the private URL was used, and the connection still failed, then the VM where the IR is installed had problems connecting to the HDI.

Connect to the VM where the IR is installed and open the Ambari UI in a browser. Use the private URL for the cluster. This connection should work from the browser. If it doesn't, contact the HDInsight support team for further assistance.

-

If a self-hosted IR isn't being used, then the HDI cluster should be accessible publicly. Open the Ambari UI in a browser and check that it opens. If there are any issues with the cluster or the services on it, contact the HDInsight support team for assistance.

The HDI cluster URL used in the linked service must be accessible for the IR (self-hosted or Azure) in order for the test connection to pass, and for runs to work. This state can be verified by opening the URL from a browser either from the VM, or from any public machine.
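The private URL rule for error 2342 (append -int to the cluster name, before the rest of the host name) can be sketched as a small helper. This is an illustration under stated assumptions: `to_private_cluster_url` is a hypothetical name, and the code assumes the cluster name is the first label of the host name, as in the mycluster example.

```python
from urllib.parse import urlparse, urlunparse


def to_private_cluster_url(public_url: str) -> str:
    """Insert '-int' after the cluster name in an HDInsight cluster URL.

    Hypothetical helper; assumes the cluster name is the first DNS label.
    """
    parsed = urlparse(public_url)
    host = parsed.hostname or ""
    cluster_name, separator, domain = host.partition(".")
    if not cluster_name or not separator:
        raise ValueError(f"cannot find a cluster name in {public_url!r}")
    # Leave URLs that already point at the private endpoint unchanged.
    if not cluster_name.endswith("-int"):
        cluster_name += "-int"
    new_host = f"{cluster_name}.{domain}"
    if parsed.port is not None:
        new_host += f":{parsed.port}"
    return urlunparse(parsed._replace(netloc=new_host))


if __name__ == "__main__":
    print(to_private_cluster_url("https://mycluster.azurehdinsight.net/"))
```

Running the helper twice on the same URL gives the same result, so it is safe to apply to a value that may already be the private URL.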

Error code: 2343

-

Message:

User name and password cannot be null or empty to connect to the HDInsight cluster. -

Cause: Either the user name or the password is empty.

-

Recommendation: Provide the correct credentials to connect to HDI and try again.

Error code: 2345

-

Message:

Failed to read the content of the hive script. Error: '%message;' -

Cause: The script file doesn't exist or the service couldn't connect to the location of the script.

-

Recommendation: Verify that the script exists, and that the associated linked service has the proper credentials for a connection.

Error code: 2346

-

Message:

Failed to create ODBC connection to the HDI cluster with error message '%message;'. -

Cause: The service tried to establish an Open Database Connectivity (ODBC) connection to the HDI cluster, and it failed with an error.

-

Recommendation:

- Confirm that you correctly set up your ODBC/Java Database Connectivity (JDBC) connection.

- For JDBC, if you're using the same virtual network, you can get this connection from: Hive -> Summary -> HIVESERVER2 JDBC URL

- To ensure that you have the correct JDBC setup, see Query Apache Hive through the JDBC driver in HDInsight.

- For ODBC, see Tutorial: Query Apache Hive with ODBC and PowerShell to ensure that you have the correct setup.

- Verify that Hiveserver2, Hive Metastore, and Hiveserver2 Interactive are active and working.

- Check the Ambari user interface (UI):

- Ensure that all services are still running.

- From the Ambari UI, check the alert section in your dashboard.

- For more information on alerts and resolutions to alerts, see Managing and Monitoring a Cluster.

- If these steps are not enough to resolve the issue, contact the Azure HDInsight team.

Error code: 2347

-

Message:

Hive execution through ODBC failed with error message '%message;'. -

Cause: The service submitted the hive script for execution to the HDI cluster via ODBC connection, and the script has failed on HDI.

-

Recommendation:

- Confirm that you correctly set up your ODBC/Java Database Connectivity (JDBC) connection.

- For JDBC, if you're using the same virtual network, you can get this connection from: Hive -> Summary -> HIVESERVER2 JDBC URL

- To ensure that you have the correct JDBC setup, see Query Apache Hive through the JDBC driver in HDInsight.

- For ODBC, see Tutorial: Query Apache Hive with ODBC and PowerShell to ensure that you have the correct setup.

- Verify that Hiveserver2, Hive Metastore, and Hiveserver2 Interactive are active and working.

- Check the Ambari user interface (UI):

- Ensure that all services are still running.

- From the Ambari UI, check the alert section in your dashboard.

- For more information on alerts and resolutions to alerts, see Managing and Monitoring a Cluster.

- If these steps are not enough to resolve the issue, contact the Azure HDInsight team.

Error code: 2348

-

Message:

The main storage has not been initialized. Please check the properties of the storage linked service in the HDI linked service. -

Cause: The storage linked service properties are not set correctly.

-

Recommendation: Only full connection strings are supported in the main storage linked service for HDI activities. Verify that you are not using MSI authorizations or applications.

Error code: 2350

-

Message:

Failed to prepare the files for the run '%jobId;'. HDI cluster: '%cluster;', Error: '%errorMessage;' -

Cause: The credentials provided to connect to the storage where the files should be located are incorrect, or the files do not exist there.

-

Recommendation: This error occurs when the service prepares for HDI activities, and tries to copy files to the main storage before submitting the job to HDI. Check that files exist in the provided location, and that the storage connection is correct. As HDI activities do not support MSI authentication on storage accounts related to HDI activities, verify that those linked services have full keys or are using Azure Key Vault.

Error code: 2351

-

Message:

Could not open the file '%filePath;' in container/fileSystem '%container;'. -

Cause: The file doesn't exist at the specified path.

-

Recommendation: Check whether the file actually exists, and that the linked service with connection info pointing to this file has the correct credentials.

Error code: 2352

-

Message:

The file storage has not been initialized. Please check the properties of the file storage linked service in the HDI activity. -

Cause: The file storage linked service properties are not set correctly.

-

Recommendation: Verify that the properties of the file storage linked service are properly configured.

Error code: 2353

-

Message:

The script storage has not been initialized. Please check the properties of the script storage linked service in the HDI activity. -

Cause: The script storage linked service properties are not set correctly.

-

Recommendation: Verify that the properties of the script storage linked service are properly configured.

Error code: 2354

-

Message:

The storage linked service type '%linkedServiceType;' is not supported for '%executorType;' activities for property '%linkedServicePropertyName;'. -

Cause: The storage linked service type isn't supported by the activity.

-

Recommendation: Verify that the selected linked service has one of the supported types for the activity. HDI activities support AzureBlobStorage and AzureBlobFSStorage linked services.

For more information, read Compare storage options for use with Azure HDInsight clusters.

Error code: 2355

-

Message:

The '%value' provided for commandEnvironment is incorrect. The expected value should be an array of strings where each string has the format CmdEnvVarName=CmdEnvVarValue. -

Cause: The provided value for commandEnvironment is incorrect.

-

Recommendation: Verify that the provided value is similar to:

\"variableName=variableValue\" ]

Also verify that each variable appears in the list only once.

Error code: 2356

-

Message:

The commandEnvironment already contains a variable named '%variableName;'. -

Cause: The provided value for commandEnvironment is incorrect.

-

Recommendation: Verify that the provided value is similar to:

\"variableName=variableValue\" ]

Also verify that each variable appears in the list only once.
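The two commandEnvironment rules from errors 2355 and 2356 (an array of Name=Value strings, each variable listed once) can be checked locally before a pipeline is published. This is a hedged sketch: `validate_command_environment` is a hypothetical helper, and the service may enforce rules beyond the two stated in the messages.

```python
def validate_command_environment(values):
    """Return a list of problems; an empty list means no issue was found.

    Hypothetical helper covering only the two documented rules:
    each entry is a 'Name=Value' string, and no name repeats.
    """
    if not isinstance(values, list):
        return ["commandEnvironment must be an array of strings"]
    problems = []
    seen = set()
    for entry in values:
        if not isinstance(entry, str) or "=" not in entry:
            problems.append(f"not in Name=Value format: {entry!r}")
            continue
        # Split on the first '=' only, so values may contain '='.
        name, _, _value = entry.partition("=")
        if not name:
            problems.append(f"empty variable name: {entry!r}")
        elif name in seen:
            problems.append(f"duplicate variable: {name}")
        seen.add(name)
    return problems


if __name__ == "__main__":
    print(validate_command_environment(["variableName=variableValue"]))
    print(validate_command_environment(["A=1", "A=2", "B"]))
```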

Error code: 2357

-

Message:

The certificate or password is wrong for ADLS Gen 1 storage. -

Cause: The provided credentials are wrong.

-

Recommendation: Verify the connection information in the ADLS Gen 1 linked service, and verify that the test connection succeeds.

Error code: 2358

-

Message:

The value '%value;' for the required property 'TimeToLive' in the on demand HDInsight linked service '%linkedServiceName;' has invalid format. It should be a timespan between '00:05:00' and '24:00:00'. -

Cause: The provided value for the required property TimeToLive has an invalid format.

-

Recommendation: Update the value to the suggested range and try again.
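The TimeToLive range from error 2358 can be checked with a short parser. This is a sketch under assumptions: it accepts only the hh:mm:ss form shown in the message, treats both bounds as inclusive, and uses the hypothetical name `parse_time_to_live`. The service may accept other timespan notations that this sketch rejects.

```python
import re
from datetime import timedelta

# Assumed format: hh:mm:ss, as in the '00:05:00' and '24:00:00' examples.
_TTL_PATTERN = re.compile(r"^(\d{1,2}):([0-5]\d):([0-5]\d)$")
MIN_TTL = timedelta(minutes=5)
MAX_TTL = timedelta(hours=24)


def parse_time_to_live(value: str) -> timedelta:
    """Parse an hh:mm:ss string and check it against the documented range."""
    match = _TTL_PATTERN.match(value)
    if match is None:
        raise ValueError(f"TimeToLive {value!r} is not in hh:mm:ss format")
    hours, minutes, seconds = (int(group) for group in match.groups())
    ttl = timedelta(hours=hours, minutes=minutes, seconds=seconds)
    # Bounds are assumed to be inclusive.
    if not MIN_TTL <= ttl <= MAX_TTL:
        raise ValueError(f"TimeToLive {value!r} is outside 00:05:00 to 24:00:00")
    return ttl


if __name__ == "__main__":
    print(parse_time_to_live("00:30:00"))
```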

Error code: 2359

-

Message:

The value '%value;' for the property 'roles' is invalid. Expected types are 'zookeeper', 'headnode', and 'workernode'. -

Cause: The provided value for the property roles is invalid.

-

Recommendation: Update the value to be one of the suggestions and try again.

Error code: 2360

-

Message:

The connection string in HCatalogLinkedService is invalid. Encountered an error while trying to parse: '%message;'. -

Cause: The provided connection string for the HCatalogLinkedService is invalid.

-

Recommendation: Update the value to a correct Azure SQL connection string and try again.

Error code: 2361

-

Message:

Failed to create on demand HDI cluster. Cluster name is '%clusterName;'. -

Cause: The cluster creation failed, and the service did not get an error back from HDInsight service.

-

Recommendation: Open the Azure portal and try to find the HDI resource with the provided name, then check the provisioning status. Contact HDInsight support team for further assistance.

Error code: 2362

-

Message:

Only Azure Blob storage accounts are supported as additional storages for HDInsight on demand linked service. -

Cause: The provided additional storage was not Azure Blob storage.

-

Recommendation: Provide an Azure Blob storage account as an additional storage for HDInsight on-demand linked service.

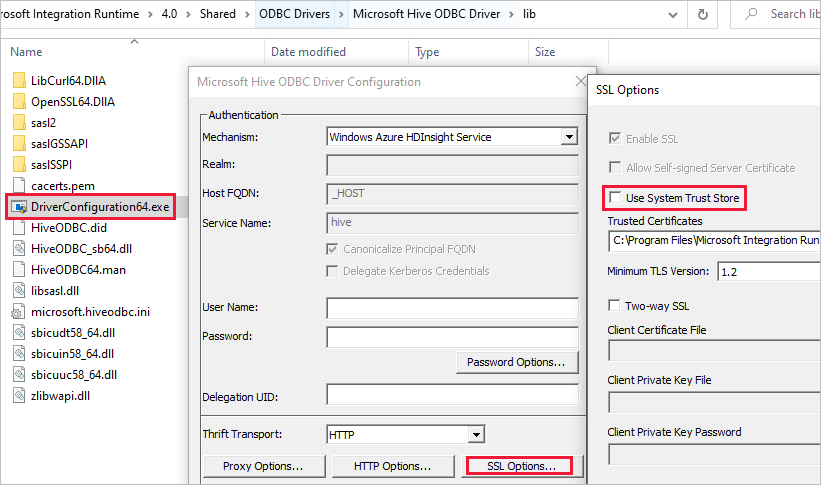

SSL error when linked service using HDInsight ESP cluster

-

Message:

Failed to connect to HDInsight cluster: 'ERROR [HY000] [Microsoft][DriverSupport] (1100) SSL certificate verification failed because the certificate is missing or incorrect. -

Cause: The issue is most likely related to the System Trust Store.

-

Resolution: You can navigate to the path Microsoft Integration Runtime\4.0\Shared\ODBC Drivers\Microsoft Hive ODBC Driver\lib and open DriverConfiguration64.exe to change the setting.

HDI activity stuck in preparing for cluster

If the HDI activity is stuck in preparing for cluster, follow the guidelines below:

-

Make sure the timeout is greater than what is described below and wait for the execution to complete or until it is timed out, and wait for the Time To Live (TTL) time before submitting new jobs.

The maximum default time that it takes to spin up a cluster is 2 hours, and if you have any init script, it will add up to another 2 hours.

-

Make sure the storage and HDI are provisioned in the same region.

-

Make sure that the service principal used for accessing the HDI cluster is valid.

-

If the issue still persists, as a workaround, delete the HDI linked service and re-create it with a new name.

Web Activity

Error code: 2128

-

Message:

No response from the endpoint. Possible causes: network connectivity, DNS failure, server certificate validation or timeout. -

Cause: This issue is due to either Network connectivity, a DNS failure, a server certificate validation, or a timeout.

-

Recommendation: Validate that the endpoint you are trying to hit is responding to requests. You may use tools like Fiddler/Postman/Netmon/Wireshark.

Error code: 2108

-

Message:

Error calling the endpoint '%url;'. Response status code: '%code;' -

Cause: The request failed due to an underlying issue such as network connectivity, a DNS failure, a server certificate validation, or a timeout.

-

Recommendation: Use Fiddler/Postman/Netmon/Wireshark to validate the request.

More details

To use Fiddler to create an HTTP session of the monitored web application:

-

Download, install, and open Fiddler.

-

If your web application uses HTTPS, go to Tools > Fiddler Options > HTTPS.

-

In the HTTPS tab, select both Capture HTTPS CONNECTs and Decrypt HTTPS traffic.

-

-

If your application uses TLS/SSL certificates, add the Fiddler certificate to your device.

Go to: Tools > Fiddler Options > HTTPS > Actions > Export Root Certificate to Desktop.

-

Turn off capturing by going to File > Capture Traffic. Or press F12.

-

Clear your browser's cache so that all cached items are removed and must be downloaded again.

-

Create a request:

-

Select the Composer tab.

-

Set the HTTP method and URL.

-

If needed, add headers and a request body.

-

Select Execute.

-

-

Turn on traffic capturing again, and complete the problematic transaction on your page.

-

Go to: File > Save > All Sessions.

For more information, see Getting started with Fiddler.

General

REST continuation token NULL error

Error message: {"token":null,"range":{"min":..}

Cause: When querying across multiple partitions/pages, the backend service returns a continuation token in JObject format with 3 properties: token, min, and max key ranges (for example, {"token":null,"range":{"min":"05C1E9AB0DAD76","max":"05C1E9CD673398"}}). Depending on the source data, querying can result in 0, indicating a missing token even though there is more data to fetch.

Recommendation: When the continuationToken is non-null, as in the string {"token":null,"range":{"min":"05C1E9AB0DAD76","max":"05C1E9CD673398"}}, you must call the queryActivityRuns API again with the continuation token from the previous response. Pass the full string to the query API again. The activities will be returned in the subsequent pages of the query result. Ignore an empty array in this page; as long as the full continuationToken value != null, you need to keep querying. For more details, refer to REST API for pipeline run query.
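The paging rule above (keep querying while the whole continuationToken value is non-null, even when a page is empty) can be sketched as a generator. Everything here is an assumption made for illustration: `query_page` is a hypothetical callable that wraps one queryActivityRuns call and returns the parsed JSON response, and the `value` and `continuationToken` field names are assumed for the response body.

```python
from typing import Callable, Iterator, Optional


def iter_activity_runs(
    query_page: Callable[[Optional[str]], dict],
) -> Iterator[dict]:
    """Yield activity runs from every page of a paged query.

    query_page is a hypothetical wrapper: it receives the continuation
    token (None for the first call) and returns one parsed response.
    """
    token: Optional[str] = None
    while True:
        page = query_page(token)
        # An empty page is not the end; only a missing token is.
        yield from page.get("value", [])
        token = page.get("continuationToken")
        if not token:
            break


if __name__ == "__main__":
    # Simulated responses: the first page is empty but carries a token.
    pages = iter([
        {"value": [], "continuationToken": '{"token":null,"range":{"min":"05C1E9AB0DAD76","max":"05C1E9CD673398"}}'},
        {"value": [{"activityName": "CopyData"}], "continuationToken": None},
    ])
    print(list(iter_activity_runs(lambda _token: next(pages))))
```

The token is passed back unchanged as a full string, including the inner "token":null part, because the stop condition depends on the outer value only.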

Activity stuck issue

When you observe that the activity is running much longer than your normal runs with barely any progress, it may be stuck. You can try canceling it and retrying to see if it helps. If it's a copy activity, you can learn about performance monitoring and troubleshooting from Troubleshoot copy activity performance; if it's a data flow, learn from the Mapping data flows performance and tuning guide.

Payload is too large

Error message: The payload including configurations on activity/dataSet/linked service is too large. Please check if you have settings with very large value and try to reduce its size.

Cause: The payload for each activity run includes the activity configuration, the associated dataset(s), and linked service(s) configurations if any, and a small portion of system properties generated per activity type. The limit of such payload size is 896 KB as mentioned in the Azure limits documentation for Data Factory and Azure Synapse Analytics.

Recommendation: You likely hit this limit because you pass in one or more large parameter values from either upstream activity output or external sources, especially if you pass actual data across activities in control flow. Check if you can reduce the size of large parameter values, or tune your pipeline logic to avoid passing such values across activities and handle them within the activity instead.
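To get a rough idea of whether a set of configurations is near the 896 KB limit, the serialized JSON size can be estimated locally. This is an approximation only: how the service measures the payload, and the size of the system properties it adds, are not described here, and the helper names below are hypothetical.

```python
import json

# 896 KB limit quoted above, expressed in bytes.
PAYLOAD_LIMIT_BYTES = 896 * 1024


def estimate_payload_bytes(*configs) -> int:
    """Sum the compact JSON size, in UTF-8 bytes, of the given objects.

    Rough estimate only; the service may serialize differently.
    """
    return sum(
        len(json.dumps(config, separators=(",", ":")).encode("utf-8"))
        for config in configs
    )


def exceeds_payload_limit(*configs, limit: int = PAYLOAD_LIMIT_BYTES) -> bool:
    """Return True when the estimated size is above the limit."""
    return estimate_payload_bytes(*configs) > limit


if __name__ == "__main__":
    activity = {"name": "CopyData", "parameters": {"rows": "x" * 1000}}
    dataset = {"name": "SourceDataset"}
    print(estimate_payload_bytes(activity, dataset))
    print(exceeds_payload_limit(activity, dataset))
```

Because the estimate ignores service-generated properties, it is best read as a lower bound: a result close to the limit already suggests trimming large parameter values.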

Unsupported compression causes files to be corrupted

Symptoms: You try to unzip a file that is stored in a blob container. A single copy activity in a pipeline has a source with the compression type set to "deflate64" (or any unsupported type). This activity runs successfully and produces the text file contained in the zip file. However, there is a problem with the text in the file, and this file appears corrupted. When this file is unzipped locally, it is fine.

Cause: Your zip file is compressed by the algorithm of "deflate64", while the internal zip library of Azure Data Factory only supports "deflate". If the zip file is compressed by the Windows system and the overall file size exceeds a certain number, Windows will use "deflate64" by default, which is not supported in Azure Data Factory. On the other hand, if the file size is smaller or you use some third-party zip tools that support specifying the compression algorithm, Windows will use "deflate" by default.
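One way to confirm this cause before running the copy activity is to read the compression method recorded for each entry in the archive. The sketch below uses Python's standard zipfile module, which can list entries even when it cannot decompress them. The function name `find_deflate64_entries` is hypothetical, and the method number 9 for Deflate64 is taken from the ZIP format's compression method table.

```python
import zipfile

# Compression method number for Deflate64 in the ZIP format;
# plain deflate is method 8 (zipfile.ZIP_DEFLATED).
DEFLATE64_METHOD = 9


def find_deflate64_entries(zip_source) -> list:
    """Return the names of archive entries compressed with Deflate64.

    zip_source may be a file path or a binary file-like object.
    Only the archive's directory is read; nothing is decompressed.
    """
    with zipfile.ZipFile(zip_source) as archive:
        return [
            info.filename
            for info in archive.infolist()
            if info.compress_type == DEFLATE64_METHOD
        ]


if __name__ == "__main__":
    import io

    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", zipfile.ZIP_DEFLATED) as archive:
        archive.writestr("sample.txt", "hello")
    # An archive written with plain deflate reports no Deflate64 entries.
    print(find_deflate64_entries(io.BytesIO(buffer.getvalue())))
```

If the list is not empty, recompressing the archive with a tool that lets you select plain deflate is one possible workaround.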

Next steps

For more troubleshooting help, try these resources:

- Data Factory blog

- Data Factory feature requests

- Stack Overflow forum for Data Factory

- Twitter information about Data Factory

- Azure videos

- Microsoft Q&A question page


Source: https://docs.microsoft.com/en-us/azure/data-factory/data-factory-troubleshoot-guide
